Your AI Controls Don’t Govern the Data That Feeds Them: What K26 Proved

ServiceNow Knowledge 2026 closed its doors in Las Vegas on May 7th. The announcements were ambitious. The architecture was impressive. And hidden in the expo theater sessions was the most honest thing anyone said all week.

I was on the floor for all three days. I sat through the keynotes. I attended the governance roadmap sessions.

I watched the Vibe Code Lounge fill up with developers building agentic applications in minutes. And I took notes on what the practitioners said when the main-stage cameras were not on them.

Here is what I heard.

What ServiceNow Announced

The K26 narrative was built around a single architectural frame: Sense. Decide. Act. Secure.

ServiceNow introduced RaptorDB, a purpose-built AI database designed for the velocity and volume that agentic workloads generate at enterprise scale. They positioned Otto as the autonomous agent layer operating above it. And they called the AI Control Tower something worth paying attention to: the operating system for enterprise AI governance.

The Sense/Decide/Act/Secure framework is real and it is well-constructed. The AI Control Tower connects models, clouds, interfaces, data, and systems. Bill McDermott called ServiceNow the AI agent of agents. Jensen Huang joined him on stage to describe the autonomous enterprise. The vision is credible.

But every one of those announcements shares the same architectural assumption. The data arriving in the system is already clean, already structured, already governed.

That assumption is not a gap in their thinking. It is a gap in the stack.

What the Practitioners Said

The sessions that stayed with me were not on the main stage.

An Everest Group presenter walked through what it took to build a unified service health command center across 1,500 services. He spent significant time on data quality. The key point on his slide was bolded.

He said it twice from the stage: “Data quality: Clean data became the foundation for everything that followed.”

His three-step path to service-level operational visibility began with a column labeled Clean Foundation before anything else could be built. CMDB made authoritative. Service mapping governed. CSDM alignment established. Only after the foundation was clean could the signals be unified and the workspace configured.

A Veza architect presenting on non-human identity governance explained why they kept their detection layer structurally separate from ServiceNow’s governance layer.

The quote was direct: “We deliberately kept it separate because this allowed our architecture to scale.” Veza sees it. ServiceNow governs it. IAM provisions it.

Three distinct layers by design, not by accident.

Both practitioners said the same thing in different contexts. Governance requires layers. No single platform can own all of them. And the foundation layer has to be clean before anything above it can be trusted.

The Problem RaptorDB Does Not Solve

RaptorDB is a meaningful announcement. Purpose-built storage for AI workloads addresses a real infrastructure constraint. When agentic systems are processing thousands of decisions per hour, the database layer matters. But faster storage of unstructured, PII-laden, semantically inconsistent data is not a governance achievement. It is a liability that scales.

Enterprise AI today receives data from multiple systems with inconsistent schemas, residual PII in outputs even after redaction attempts, and normalization costs that multiply across every platform consuming the same data. The current baseline: 15 percent residual PII in redacted outputs, 3x normalization cost per AI platform, and a 40 percent auto-processing ceiling with legacy tools.

Those numbers do not improve because RaptorDB retrieves the data faster. They improve when the data is governed before it enters the system.

Where StrataLayer Lives in the Stack

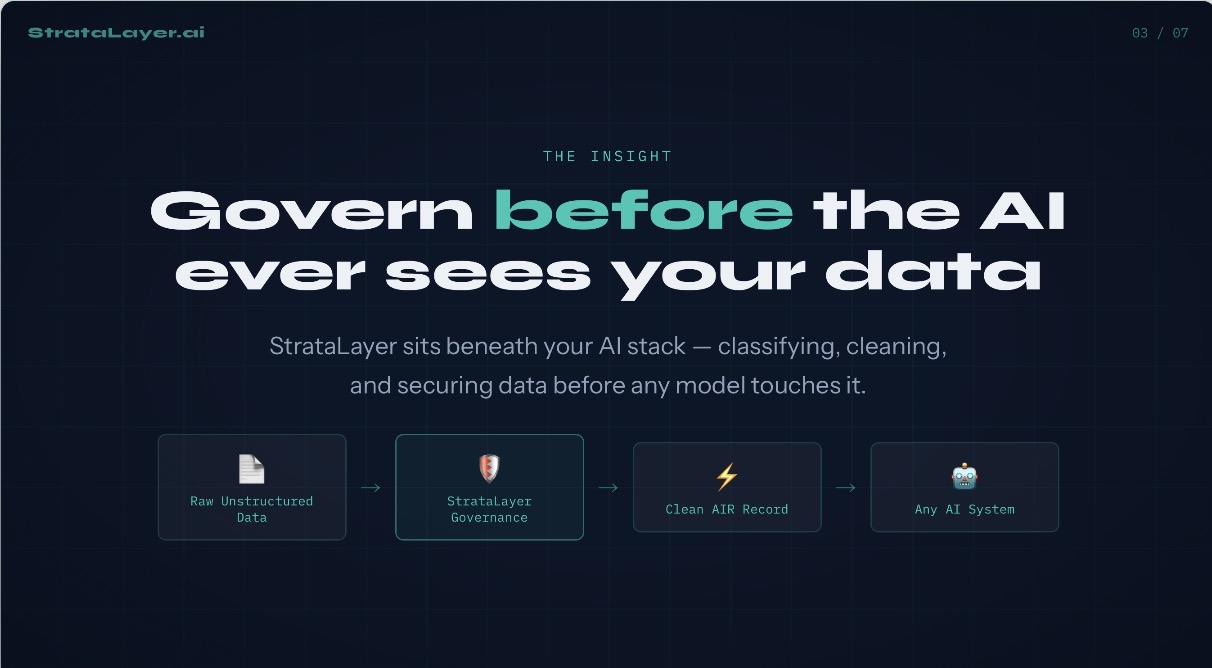

ServiceNow called the AI Control Tower the operating system for enterprise AI governance. That framing is useful because it tells you exactly where StrataLayer lives.

Every operating system assumes a hardware layer beneath it. The OS does not provision the BIOS. The OS does not govern what arrives at the hardware boundary. It operates on what the substrate provides.

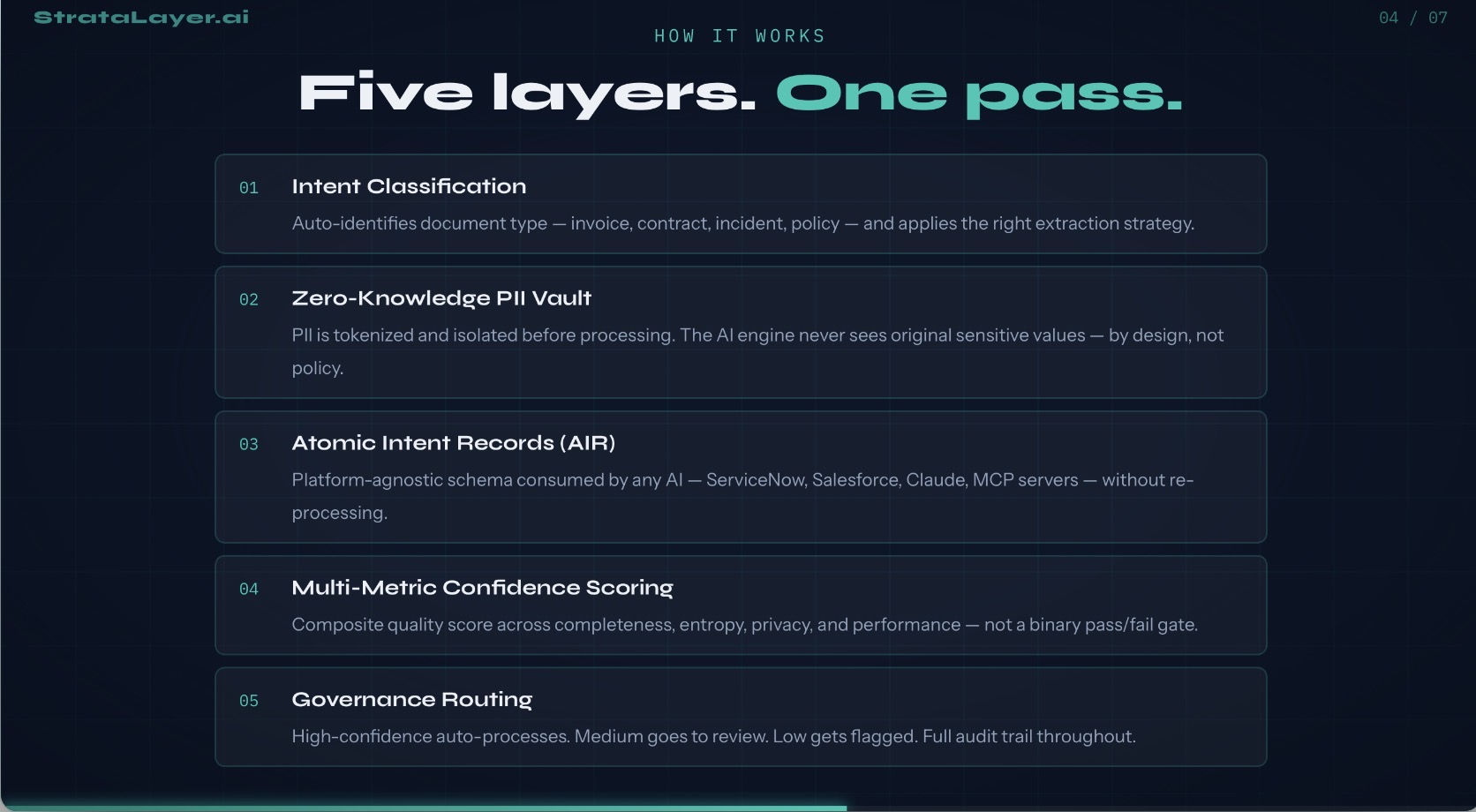

StrataLayer is pre-processing governance for AI. It sits beneath the AI stack, classifying, cleaning, and securing data before any model touches it. Raw enterprise data enters. StrataLayer applies five layers in a single pass: Intent Classification, Zero-Knowledge PII Vault, Semantic Normalization, Multi-Metric Confidence Scoring, and Governance Routing.

What exits is a clean Atomic Intent Record, a platform-agnostic schema that any AI system (ServiceNow, Salesforce, Claude, MCP servers) can consume without re-processing.
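To make the single-pass idea concrete, here is a minimal sketch of how five stages could run in order and emit one record. It is an illustration only, not StrataLayer's implementation: every function name, the record fields, the regex rules, and the 0.75 routing threshold are assumptions made for this example. A plain in-memory token map stands in for the PII vault and is not zero-knowledge.

```python
import hashlib
import re
from dataclasses import dataclass, field

# Hypothetical rules for illustration; real classifiers would be far richer.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
AMOUNT_RE = re.compile(r"(?:total|amount)\s*[:=]?\s*\$?([\d,]+(?:\.\d+)?)", re.I)


@dataclass
class AtomicIntentRecord:
    """Assumed shape of the output record; the real schema is not public here."""
    intent: str
    text: str          # source text with PII swapped for vault tokens
    fields: dict       # normalized values extracted from the text
    confidence: float
    route: str
    audit: list = field(default_factory=list)


def classify_intent(raw: str) -> str:
    """Stage 1: keyword-based intent classification (toy rule)."""
    return "invoice" if "invoice" in raw.lower() else "unknown"


def vault_pii(raw: str, vault: dict) -> str:
    """Stage 2: replace email addresses with tokens; originals go to the vault."""
    def swap(match: re.Match) -> str:
        token = "pii_" + hashlib.sha256(match.group(0).encode()).hexdigest()[:12]
        vault[token] = match.group(0)
        return f"<{token}>"
    return EMAIL_RE.sub(swap, raw)


def normalize(text: str) -> dict:
    """Stage 3: pull a monetary amount into a typed, consistent field."""
    match = AMOUNT_RE.search(text)
    return {"amount": float(match.group(1).replace(",", ""))} if match else {}


def score(intent: str, fields: dict) -> float:
    """Stage 4: average two simple signals into one confidence value."""
    intent_score = 0.0 if intent == "unknown" else 1.0
    field_score = 1.0 if fields else 0.0
    return (intent_score + field_score) / 2


def route(confidence: float, threshold: float = 0.75) -> str:
    """Stage 5: send low-confidence records to a human reviewer."""
    return "auto_process" if confidence >= threshold else "human_review"


def preprocess(raw: str, vault: dict) -> AtomicIntentRecord:
    """Run all five stages once, recording each step in the audit trail."""
    intent = classify_intent(raw)
    text = vault_pii(raw, vault)
    fields = normalize(text)
    confidence = score(intent, fields)
    decision = route(confidence)
    audit = [
        f"intent={intent}",
        f"pii_tokens={len(vault)}",
        f"fields={sorted(fields)}",
        f"confidence={confidence}",
        f"route={decision}",
    ]
    return AtomicIntentRecord(intent, text, fields, confidence, decision, audit)


if __name__ == "__main__":
    vault: dict = {}
    record = preprocess("Invoice 1042 from billing@example.com, total: $1,250.00", vault)
    print(record)
```

The point of the sketch is the ordering: the PII swap happens before normalization and scoring, so nothing downstream of stage 2 ever sees the raw address, and the audit trail is assembled inside the same pass rather than reconstructed afterward.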

The measured results from production testing on 1,000 enterprise invoices: 0 percent PII exposure during processing, 67 percent reduction in multi-system processing cost, 67 percent auto-processing rate against a 40 percent baseline, 1.2ms average processing time, and 30 to 50 percent data size reduction on every run.

That last number is the one that connects directly to RaptorDB. Smaller, cleaner, structured records entering a purpose-built AI database means lower storage costs, faster retrieval, and more reliable agent decisions. StrataLayer does not compete with RaptorDB. It determines the quality of what RaptorDB holds.
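For readers who want to see what those percentages imply in absolute terms, the arithmetic can be sketched as follows. The rates and the 1,000-invoice sample come from the figures above; applying the 40 percent legacy baseline to that same sample, and the 100 GB raw volume, are assumptions made purely for illustration.

```python
INVOICES = 1_000            # sample size reported above
BASELINE_AUTO_RATE = 0.40   # legacy auto-processing ceiling cited above
REPORTED_AUTO_RATE = 0.67   # auto-processing rate reported above


def manual_count(total: int, auto_rate: float) -> int:
    """Records that still need a human when auto_rate of them are automated."""
    return round(total * (1 - auto_rate))


def reduced_size(raw_gb: float, reduction: float) -> float:
    """Stored volume after shrinking raw_gb by the given fractional reduction."""
    return raw_gb * (1 - reduction)


baseline_manual = manual_count(INVOICES, BASELINE_AUTO_RATE)
reported_manual = manual_count(INVOICES, REPORTED_AUTO_RATE)

# Relative improvement of the auto-processing rate over the baseline.
relative_lift = (REPORTED_AUTO_RATE - BASELINE_AUTO_RATE) / BASELINE_AUTO_RATE

# Hypothetical 100 GB of raw input, at both ends of the 30 to 50 percent range.
RAW_GB = 100.0
largest_stored = reduced_size(RAW_GB, 0.30)
smallest_stored = reduced_size(RAW_GB, 0.50)

if __name__ == "__main__":
    print(f"manual reviews: {baseline_manual} -> {reported_manual}")
    print(f"relative lift in auto-processing: {relative_lift:.1%}")
    print(f"stored volume: {smallest_stored:.0f} to {largest_stored:.0f} GB")
```

On these assumptions, manual handling falls from 600 invoices to 330, and a hypothetical 100 GB of raw input lands in the database as roughly 50 to 70 GB.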

Otto operating as an autonomous agent is only as reliable as the data it consumes. Agentic AI acting at speed on ungoverned data is not a capability problem. It is an enterprise liability.

The Atomic Intent Record (AIR) produced by StrataLayer gives Otto, the AI Control Tower, and every downstream agent something defensible to act on.

Not redaction theater applied after the fact. Audit trail from the first byte. Compliance evidence built in before the model ever makes a decision.

ServiceNow’s framework says the Sense layer governs data.

Sense → Decide → Act → Secure.

What it does not address is what happens to the data before it enters the Sense layer.

That is not an oversight. That is the gap StrataLayer fills.

The Line That Closed the Conference

“2026 is not about experimentation anymore.”

That was said from the main stage.

It was true in 2024. It is overdue in 2026.

If this is the year of execution, then ungoverned data pipelines are no longer a planning problem. They are an operational liability that regulators, auditors, and boards are already asking about.

Colorado SB 24-205 is law. The EU AI Act is in force. Regulators do not wait for your platform roadmap.

StrataLayer is not a response to a problem that has already happened.

It is the condition you establish before the problem has a name.

Governed AI before you need it.

Start With the 42-Day Assessment

The 42-Day StrataLayer Assessment maps your data governance posture against the pre-processing model and identifies exactly where your AI stack is exposed.

Not where it may be exposed someday.

Where it is exposed right now, before your next agent deployment, before your next audit, before your next incident.

James Naphen is the Founder and CPO of EcoStratus Technologies and StrataLayer.ai, a patent-pending pre-processing governance platform for enterprise AI.

He publishes at sncdevelopment.com and writes the 30-Second Perspectives series on LinkedIn.
