30-Second Perspectives

A 30-Second Perspective on pre-processing governance and the gap at the bottom of the enterprise AI stack.

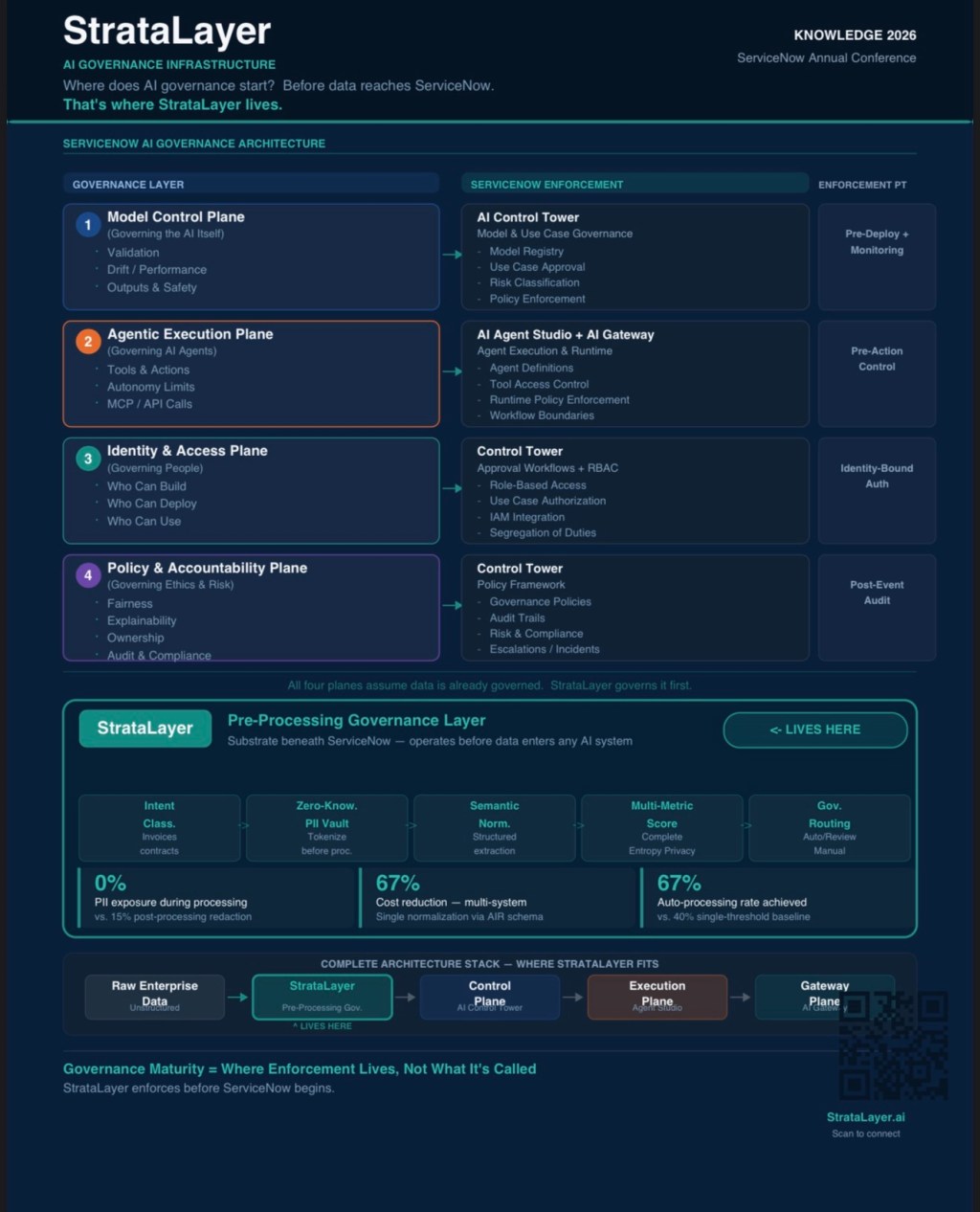

At Knowledge 2026 this week, ServiceNow is demonstrating what a mature AI governance architecture looks like inside the platform. It defines four enforcement planes: model governance, agentic execution, identity and access, and policy and accountability. Each is mapped to a specific ServiceNow enforcement point, from AI Control Tower through AI Gateway.

It is a coherent framework. The problem is not the framework. The problem is where it starts.

The Assumption Embedded in Every Control Plane

Every governance layer in the ServiceNow architecture, and in most enterprise AI governance frameworks, begins with an implicit assumption: the data entering the system is ready to be governed. It has already been normalized. PII has already been handled. Semantic consistency has already been established across source systems.

In practice, that assumption is false before it is tested.

Enterprise data does not arrive in a governable state. An invoice processed by three departments carries three different representations of the same vendor identifier. A customer record exported from a CRM contains personally identifiable information woven into the business context because no one separated them at the source. A service desk incident arrives with freeform text that references systems, individuals, and sensitive operational details in the same unstructured field.

Your model control plane does not see that problem. It receives what the data pipeline delivers. Your agentic execution controls enforce what actions agents can take, but agents take those actions on whatever data reached them. Your policy and accountability layer audits outcomes. It cannot audit what happened to PII during a processing step that occurred upstream of every enforcement point in your stack.
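To make the vendor-identifier case concrete, here is a minimal Python sketch of normalizing three departmental representations into one. The alias table, the `V-000123` canonical format, and both function names are assumptions made for illustration; they do not describe any real source system or StrataLayer's actual rules.

```python
import re

# Hypothetical aliases: three source systems naming the same concept differently.
FIELD_ALIASES = {
    "vendor_id": "vendor_id",
    "VendorNo": "vendor_id",
    "supplier_code": "vendor_id",
}


def canonical_vendor_id(raw: str) -> str:
    """Reduce a vendor identifier to an assumed canonical form, V-NNNNNN."""
    digits = re.sub(r"\D", "", raw)  # keep only the numeric part
    if not digits:
        raise ValueError(f"no digits in vendor identifier: {raw!r}")
    return f"V-{int(digits):06d}"


def normalize_record(record: dict) -> dict:
    """Rename aliased fields and canonicalize vendor identifiers."""
    normalized = {}
    for key, value in record.items():
        name = FIELD_ALIASES.get(key, key)  # unknown fields pass through unchanged
        if name == "vendor_id":
            value = canonical_vendor_id(str(value))
        normalized[name] = value
    return normalized
```

Under these assumptions, `{"VendorNo": "v00123"}`, `{"supplier_code": "123"}`, and `{"vendor_id": "V-000123"}` all normalize to the same record, which is the state a downstream control plane implicitly expects.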

The Gap Is Architectural, Not Procedural

This is not a training problem or a policy awareness problem. It is an architectural problem, and it has a specific location: the boundary between raw enterprise data and the first AI system that touches it.

Traditional approaches treat this boundary as a data engineering concern. ETL pipelines clean and transform. Post-processing redaction removes sensitive content from outputs. These approaches share the same fundamental limitation: they run only after a processing engine has already handled the original values, which means exposure has occurred before remediation begins. Post-processing redaction can also leave residual PII in supposedly cleaned outputs, not because the tools are poorly designed, but because the architecture guarantees exposure before the tools run.

The only way to achieve zero PII exposure during AI processing is to ensure the processing engine never receives original PII values. That is not a redaction problem. It is a vault architecture problem. It requires separating sensitive data storage from processing logic at the network level, before any AI system ingests the data, with cryptographic separation that makes access architecturally impossible rather than just procedurally prohibited.
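The data flow behind that claim can be sketched in a few lines of Python. This is an illustration under stated assumptions, not StrataLayer's implementation: `TokenVault`, `tokenize_text`, and `processing_engine` are hypothetical names, only email addresses are detected, and the vault here is an in-process object, whereas the separation described above would place it behind a network and cryptographic boundary.

```python
import re
import secrets

# Simplified email pattern; a real detector would cover many more PII types.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class TokenVault:
    """Hypothetical vault: the only component that ever holds original values."""

    def __init__(self) -> None:
        self._by_token = {}  # token -> original value
        self._by_value = {}  # original value -> token (repeats reuse one token)

    def tokenize(self, value: str) -> str:
        if value not in self._by_value:
            token = f"<PII:{secrets.token_hex(8)}>"  # random, not derived from the value
            self._by_value[value] = token
            self._by_token[token] = value
        return self._by_value[value]

    def detokenize(self, token: str) -> str:
        return self._by_token[token]


def tokenize_text(text: str, vault: TokenVault) -> str:
    """Replace each detected email with a vault token before any processing."""
    return EMAIL_RE.sub(lambda match: vault.tokenize(match.group(0)), text)


def processing_engine(text: str) -> dict:
    """Stand-in for an AI processing step; it only ever receives tokenized text."""
    return {"length": len(text), "saw_raw_email": bool(EMAIL_RE.search(text))}
```

Because the tokens are random rather than derived from the value, the processing side cannot recover the original without a call to the vault, and that call is the one access path an architecture can then remove from the processing environment.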

That capability does not live inside ServiceNow. It lives below it.

Where StrataLayer Fits in the Stack

StrataLayer operates as a pre-processing governance substrate beneath AI orchestration systems. Before unstructured enterprise data reaches any AI system (ServiceNow’s control planes, any agent, any model), it passes through a classification and normalization layer that performs five functions: intent classification by document type, zero-knowledge PII tokenization with vault separation, semantic normalization into platform-agnostic Atomic Intent Records, multi-metric confidence scoring, and governance routing based on data quality thresholds.
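The last two of those functions, confidence scoring and threshold-based routing, might look roughly like the Python sketch below. The actual Atomic Intent Record schema, metrics, and thresholds are not described here, so every field name, the choice of the weakest metric as the overall score, the 0.9 and 0.6 cutoffs, and the three route labels are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AtomicIntentRecord:
    """Hypothetical shape for a normalized record; not the real schema."""

    doc_type: str
    fields: dict
    metrics: dict = field(default_factory=dict)  # metric name -> score in [0, 1]

    @property
    def confidence(self) -> float:
        # Assumed policy: a record is only as trustworthy as its weakest metric.
        return min(self.metrics.values()) if self.metrics else 0.0


def route(record: AtomicIntentRecord,
          auto_threshold: float = 0.9,
          review_threshold: float = 0.6) -> str:
    """Decide where a record goes based on assumed data quality thresholds."""
    score = record.confidence
    if score >= auto_threshold:
        return "forward"       # passes on to downstream AI systems
    if score >= review_threshold:
        return "human_review"  # held for a person to check
    return "quarantine"        # never reaches a model or agent
```

Taking the minimum rather than the average is a deliberately conservative choice in this sketch: one weak measurement, such as low extraction confidence, is enough to hold a record back even when its classification score is high.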

The output is clean, governed, privacy-safe data that ServiceNow’s enforcement architecture receives in the state its governance layers assume, rather than the state in which raw enterprise data actually arrives.

This is not a replacement for the ServiceNow AI governance framework. It is the substrate that makes the framework’s assumptions valid. Control planes govern agents. Execution planes enforce action boundaries. Identity planes manage authorization. Policy planes maintain accountability. StrataLayer governs what all of those planes receive before they have a decision to make.

The Question Worth Asking at Knowledge 2026

Enterprise AI programs attending this week’s conference will spend considerable time mapping governance layers, enforcement points, and control mechanisms inside ServiceNow.

That work is valuable and necessary.

The more clarifying question is one layer below it:

What does your data look like before it reaches any of those layers?

Has PII been architecturally separated from your processing engines, or is it present in memory during normalization?

Are semantic inconsistencies resolved before AI ingestion, or are your models processing the same business concept under three different field names depending on the source system?

Is your confidence in data quality a function of measurement or assumption?

Governance maturity is not measured by the sophistication of your control planes. It is measured by what your data looks like before any of those planes see it.

Leadership Question: Your organization has invested in AI governance tooling at the orchestration and agent layer.

Can you describe, with specificity, what governance is applied to your enterprise data in the 1,200 milliseconds before it reaches any of those tools?

This is a 30-Second Perspective from EcoStratus Technologies. StrataLayer is a pre-processing governance assessment and implementation offering for enterprise AI programs building on ServiceNow and multi-platform AI infrastructure. The StrataLayer 42-Day Assessment identifies pre-ingestion governance gaps and delivers an architectural remediation roadmap. Learn more at StrataLayer.ai.
