30-Second Perspectives — Agentic AI

The more autonomous the agent, the harder the constraints need to be.

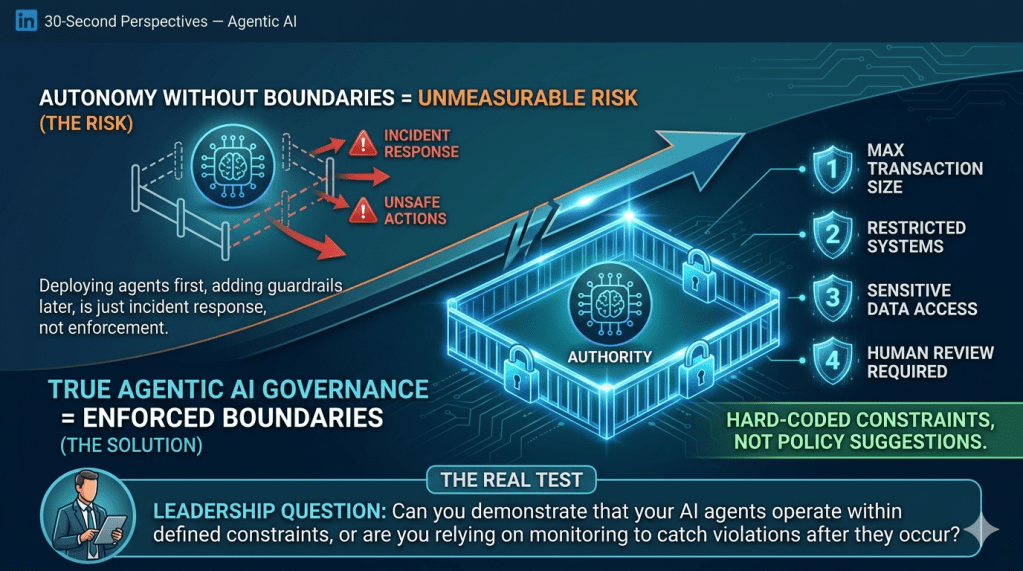

Autonomy without boundaries isn’t freedom. It’s risk you can’t measure.

Most organizations approach this backward. They deploy the agent, monitor for problems, and add guardrails after something goes wrong. That’s not governance. That’s incident response.

Boundaries First, Autonomy Second

Governance for agentic AI starts with defining what the agent cannot do—not what it can.

Maximum financial transaction size. Systems it can’t modify. Data it can’t access. Decisions that require human confirmation. Actions prohibited entirely.

These constraints aren’t suggestions in a policy doc. They’re code. Hard stops that execute before the agent acts.
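A minimal sketch of what "constraints as code" could look like, assuming a Python agent runtime. Every name and value here (`HardConstraints`, `Action`, `execute`, the 500 USD limit, the system names) is hypothetical and chosen only for illustration. The point is the ordering: the check runs, and can raise, before the action does.

```python
from dataclasses import dataclass


class ConstraintViolation(Exception):
    """Raised when a proposed action crosses a hard boundary."""


@dataclass(frozen=True)
class HardConstraints:
    # Illustrative values only; real limits come from the organization.
    max_transaction_usd: float = 500.0
    unmodifiable_systems: frozenset = frozenset({"payroll", "prod-db"})
    restricted_data: frozenset = frozenset({"customer_pii"})
    needs_human_confirmation: frozenset = frozenset({"sign_contract"})
    prohibited_actions: frozenset = frozenset({"delete_backup"})


@dataclass(frozen=True)
class Action:
    name: str
    target: str = ""
    amount_usd: float = 0.0
    modifies_target: bool = False
    human_confirmed: bool = False


def check(action, constraints):
    """Raise ConstraintViolation if the action breaks any constraint."""
    c = constraints
    if action.name in c.prohibited_actions:
        raise ConstraintViolation(f"{action.name} is prohibited")
    if action.amount_usd > c.max_transaction_usd:
        raise ConstraintViolation("amount exceeds the transaction limit")
    if action.modifies_target and action.target in c.unmodifiable_systems:
        raise ConstraintViolation(f"{action.target} cannot be modified")
    if action.target in c.restricted_data:
        raise ConstraintViolation(f"{action.target} is restricted data")
    if action.name in c.needs_human_confirmation and not action.human_confirmed:
        raise ConstraintViolation(f"{action.name} needs human confirmation")


def execute(action, constraints, run):
    """Run the action only after every constraint check has passed."""
    check(action, constraints)  # hard stop: raises before `run` is called
    return run(action)
```

If `check` raises, `run` is never invoked, so a violation is a refused action rather than a logged one.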

Policy-as-Code, Not Code-as-Policy

Traditional governance writes policies and hopes systems comply. Agentic governance encodes the policy into the system architecture.

The agent can’t exceed its spending authority because the financial system won’t process transactions above its threshold. It can’t access restricted data because the access control system denies the request. It can’t act on its own reading of an ambiguous policy because those decisions route to a human.

Compliance isn’t monitored. It’s enforced.
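To make "enforced, not monitored" concrete, here is a hedged sketch in which the limits live in the downstream systems rather than in the agent. `PaymentGateway`, `DataStore`, the caller name, and the amounts are all hypothetical stand-ins, not a real API. The agent can request anything; the system it calls decides.

```python
class PaymentGateway:
    """Hypothetical financial system. It owns the limits; the agent does not."""

    def __init__(self, limits_usd):
        self._limits_usd = dict(limits_usd)  # caller identity -> spending limit
        self.processed = []                  # payments that actually went through

    def pay(self, caller, amount_usd):
        # Unknown callers get a limit of zero, so the default is refusal.
        limit = self._limits_usd.get(caller, 0.0)
        if amount_usd <= 0 or amount_usd > limit:
            raise PermissionError(f"{caller} may not pay {amount_usd:.2f} USD")
        self.processed.append((caller, amount_usd))
        return "processed"


class DataStore:
    """Hypothetical data system that checks an access list on every read."""

    def __init__(self, records, readable_by):
        self._records = dict(records)
        self._readable_by = {k: frozenset(v) for k, v in readable_by.items()}

    def read(self, caller, key):
        # A key with no access list is readable by nobody.
        if caller not in self._readable_by.get(key, frozenset()):
            raise PermissionError(f"{caller} may not read {key}")
        return self._records[key]
```

Because the refusal happens inside `pay` and `read`, it holds no matter how the agent was prompted or what it decided to attempt.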

What This Requires Technically

Every agent needs three things:

A defined scope of authority—what decisions it can make autonomously. A set of hard constraints—actions it cannot take under any circumstance. Clear escalation logic—when uncertainty triggers human review.
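The three requirements can be sketched as one decision function with three outcomes. This is an illustration under stated assumptions, not a reference design: `AgentPolicy`, `decide`, and the 0.9 confidence threshold are hypothetical, and "uncertainty" is reduced here to a single confidence score supplied by the caller.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # inside scope, agent acts on its own
    ESCALATE = "escalate"  # a human reviews before anything happens
    DENY = "deny"          # never permitted


@dataclass(frozen=True)
class AgentPolicy:
    scope: frozenset              # actions the agent may take autonomously
    hard_constraints: frozenset   # actions it may not take under any circumstance
    min_confidence: float = 0.9   # assumed threshold; below it, escalate


def decide(policy, action, confidence):
    """Map a proposed action to ALLOW, ESCALATE, or DENY."""
    # Hard constraints are checked first so nothing can override them.
    if action in policy.hard_constraints:
        return Decision.DENY
    # Anything outside the defined scope is not the agent's call to make.
    if action not in policy.scope:
        return Decision.ESCALATE
    # In scope, but the agent is unsure: route to a human.
    if confidence < policy.min_confidence:
        return Decision.ESCALATE
    return Decision.ALLOW


policy = AgentPolicy(
    scope=frozenset({"refund_order", "send_reply"}),
    hard_constraints=frozenset({"delete_account"}),
)
```

Escalating out-of-scope actions rather than denying them is a design choice made for this sketch; a stricter policy could return `DENY` for anything not explicitly listed.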

This isn’t a feature request. This is the operating requirement.

The Real Test

Governance maturity for agentic AI isn’t measured by how well you document intent. It’s measured by how effectively you prevent unauthorized action.

If your agent can act outside its defined authority, your governance model is documentation, not enforcement.
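One way to check which side of that line you are on is to probe the enforcement layer with actions that must be refused and see whether any get through. The sketch below assumes a hypothetical `enforce` function that raises `PermissionError` on refusal; the action names and the allow-list are invented for illustration.

```python
def find_leaks(enforce, probes):
    """Return every probe the enforcement layer failed to refuse."""
    leaks = []
    for probe in probes:
        try:
            enforce(probe)
        except PermissionError:
            continue  # refused, as required
        leaks.append(probe)  # went through: this boundary exists only on paper
    return leaks


# Toy enforcement layer, for illustration only.
ALLOWED_ACTIONS = {"read_price_list", "draft_reply"}


def enforce(action):
    if action not in ALLOWED_ACTIONS:
        raise PermissionError(action)
    return "ok"


# Probes are actions the agent must never be able to complete.
PROBES = ["delete_backup", "export_customer_pii", "approve_own_budget"]
```

An empty result from `find_leaks(enforce, PROBES)` is evidence of enforcement for those probes only; it says nothing about actions nobody thought to test.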

The goal isn’t to trust the agent. The goal is to build a system where trust isn’t required—because the boundaries are structural, not behavioral.

Leadership Question

Can you demonstrate that your AI agents operate within defined constraints, or are you relying on monitoring to catch violations after they occur?
