30-Second Perspectives — Zero-Knowledge

Your AI governance policy assumes data is movable. It’s not.

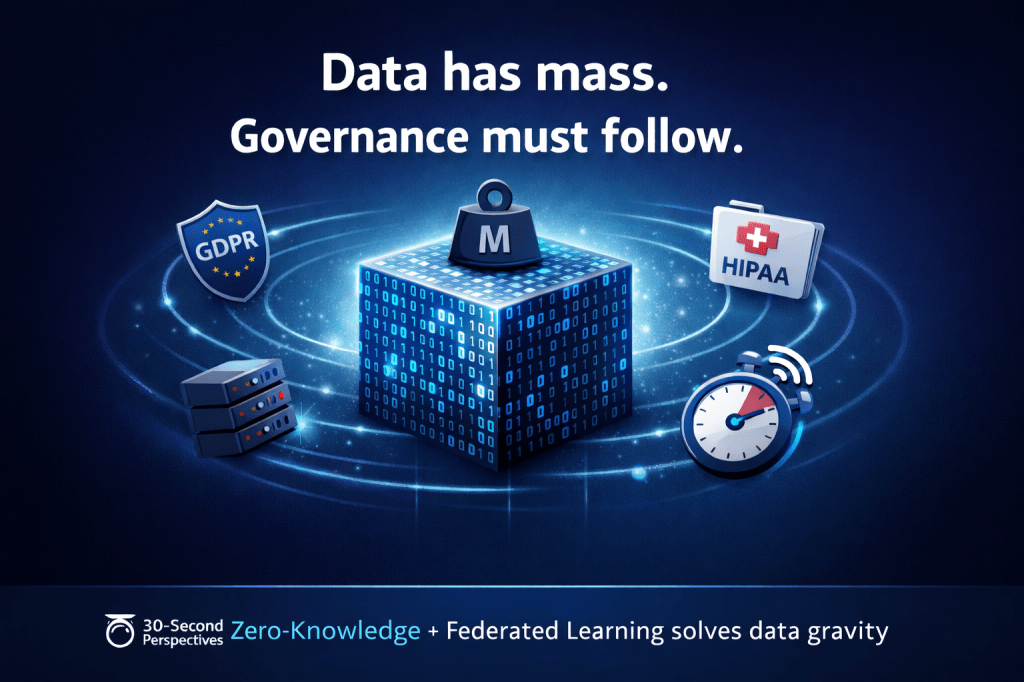

Data has mass. Mass creates gravity. Gravity determines where computation happens.

This is not a metaphor. It’s the operational constraint every AI team ignores until it’s too late.

Why Data Doesn’t Move

Regulations create legal gravity (GDPR, HIPAA, FedRAMP). Scale creates technical gravity (moving petabytes usually costs more, in time and egress fees, than moving compute to the data). Latency creates performance gravity (real-time AI can’t wait for cross-region data transfers).
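To put rough numbers on technical gravity, here is a back-of-the-envelope sketch. The dataset size and the 10 Gbit/s link are illustrative assumptions, not figures from any particular deployment, and the helper name `transfer_days` is hypothetical.

```python
SECONDS_PER_DAY = 86_400

def transfer_days(data_bytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Estimate the days needed to push `data_bytes` over a link of `link_gbps`.

    `efficiency` is an assumed fraction of the nominal link speed that is
    actually achieved (1.0 means a perfectly saturated link).
    """
    bits = data_bytes * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / SECONDS_PER_DAY

# Assumed scenario: 1 petabyte (10**15 bytes) over a dedicated 10 Gbit/s link.
days = transfer_days(1e15, 10)
print(f"{days:.1f} days")  # roughly 9.3 days, before any egress fees
```

Shipping a model or a training job to the data is typically a matter of megabytes or gigabytes, which is why the compute tends to move instead.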

Leaders treat this as an infrastructure problem. It’s a governance problem.

When data can’t move, governance must follow the data—not the other way around.

The Zero-Knowledge Solution

Secure multi-party computation and federated learning let models train on data without the raw data ever leaving its jurisdiction: only model updates or encrypted shares cross the boundary. Zero-knowledge proofs can, in principle, let you verify that a model was trained on data meeting stated compliance rules without auditing the dataset itself.
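A minimal sketch of the federated idea, assuming a toy one-parameter model (y ≈ w · x) and two hypothetical sites. The class and function names are illustrative, and a production system would add secure aggregation and privacy protections on top. The point is only that each site returns a weight and a record count, never its records.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Site:
    """A hypothetical data holder; `records` stays inside this object."""
    name: str
    records: List[Tuple[float, float]]  # (x, y) pairs kept in-jurisdiction

    def local_update(self, w: float, lr: float = 0.01, steps: int = 20) -> Tuple[float, int]:
        """Run gradient descent locally; return only (weight, record count)."""
        for _ in range(steps):
            grad = sum(2 * (w * x - y) * x for x, y in self.records) / len(self.records)
            w -= lr * grad
        return w, len(self.records)

def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Combine site weights, weighting each by how many records it trained on."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total

def train(sites: List[Site], rounds: int = 30) -> float:
    w = 0.0  # shared starting weight
    for _ in range(rounds):
        updates = [site.local_update(w) for site in sites]  # runs where the data lives
        w = federated_average(updates)                      # only weights are pooled
    return w

# Two assumed jurisdictions whose data both follow y = 3x.
sites = [
    Site("eu", [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]),
    Site("us", [(4.0, 12.0), (5.0, 15.0)]),
]
print(round(train(sites), 4))  # converges to the shared slope, 3.0
```

The coordinator in `train` never touches `records`; that separation is what lets oversight follow the data rather than relocating it.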

This is how you govern AI when data sovereignty is enforced, not optional.

What This Means for Leaders

If your governance model requires centralizing data for oversight, you’re designing for a world that no longer exists. Distributed data requires distributed governance, with cryptographic verification at the edges.
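As a deliberately simplified illustration of verification at the edges, the sketch below uses a salted hash commitment, assuming a site publishes a digest of its dataset manifest at training time. This is a weaker stand-in and not a zero-knowledge proof: opening the commitment reveals the manifest to the auditor, whereas a real zero-knowledge system would prove properties of the data without revealing it. The function names and manifest contents are hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Tuple

def commit(manifest: bytes) -> Tuple[str, bytes]:
    """Return (public commitment, private salt) for a dataset manifest."""
    salt = secrets.token_bytes(32)  # random salt keeps the manifest unguessable
    digest = hashlib.sha256(salt + manifest).hexdigest()
    return digest, salt

def verify(commitment: str, salt: bytes, manifest: bytes) -> bool:
    """Check that an opened (salt, manifest) pair matches the earlier commitment."""
    expected = hashlib.sha256(salt + manifest).hexdigest()
    return hmac.compare_digest(commitment, expected)

# The site commits when training starts; only `commitment` is published.
manifest = b"dataset=claims-2024;region=eu-west;consent=verified"
commitment, salt = commit(manifest)

# Later, an in-jurisdiction auditor receives the salt and manifest and checks them.
print(verify(commitment, salt, manifest))                   # True
print(verify(commitment, salt, manifest + b";edited=yes"))  # False
```

Even this simple scheme shows the shape of the pattern: the central party holds a short, tamper-evident digest, while the evidence itself stays where the data lives.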

Leadership Question

If your most sensitive data can’t legally leave its current location, how do you govern an AI model trained on it?

What Comes Next

In the next perspective, we’ll examine how zero-knowledge transforms audit trails—making AI decisions verifiable without making them visible.